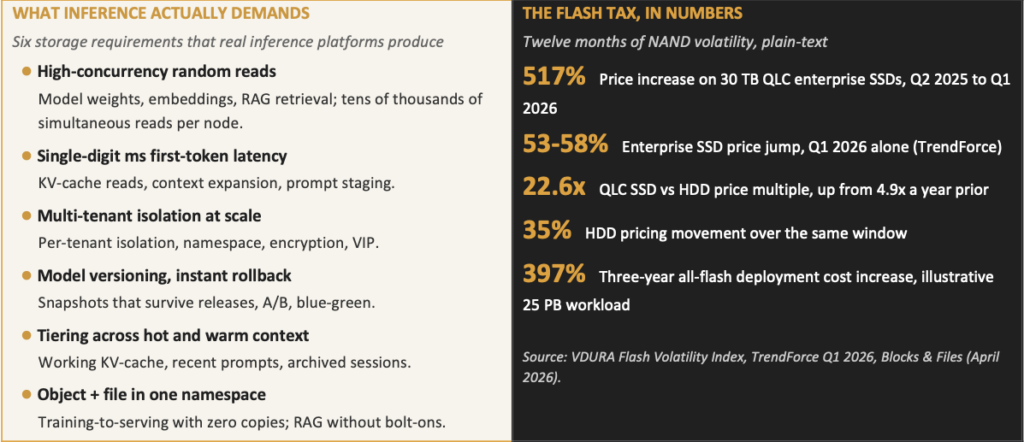

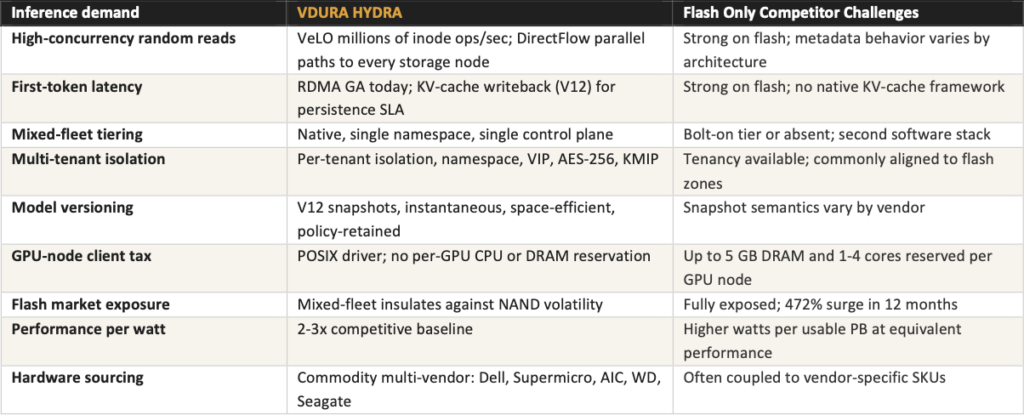

Two storage vendors have spent the last eighteen months convincing the market that AI inference is an all-flash problem. It is not. Inference is a workload-shape problem, and the shape is mixed: large model libraries, hot KV-cache, growing RAG corpora, multi-tenant traffic, and a versioning surface that compounds with every release. The architectures that win the next decade of inference look like what hyperscalers already run in production. They do not look like all-flash silos.

Google’s Colossus, Meta’s Tectonic, and Microsoft’s Azure storage stacks are software-defined, mixed-fleet, and tiered. In these systems, flash is a performance medium, not a capacity medium. They run inference at a scale that dwarfs any AI factory deployed today, and they do not buy all-flash. The all-flash narrative contradicts the hyperscaler architecture papers published over the last five years. The neocloud and AI-factory market has been told a different story, and that story is now colliding with NAND supply economics.

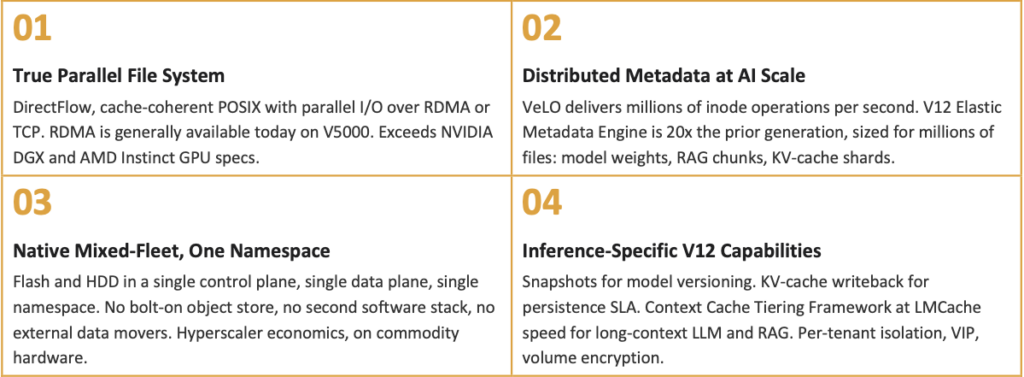

Four architectural properties that no all-flash competitor delivers in one stack

Inference is not an all-flash problem. The vendors who positioned themselves as the inference platform built fast all-flash storage. That is a useful product. It is not a defensible inference architecture in 2026, when flash supply and pricing are volatile, KV-cache demands intelligent tiering, model libraries are growing into petabyte territory, and multi-tenant inference platforms must isolate hundreds of customers on the same fleet. VDURA delivers an inference architecture that uses flash for GPU performance and HDD for capacity at AI data scale, all in one namespace, one control plane, and one data plane.