By Petros Koutoupis, Product Manager, VDURA, Feb 26, 2026 (LinkedIn Blog Post) – As HPC and AI environments continue to grow, storage teams are under constant pressure to deliver greater performance and capacity while keeping costs under control. One attractive option is to reuse SSDs from older systems or retired clusters and drop them into new deployments. And why not? SSD prices have been rising recently due to strong demand from AI and cloud deployments, as well as a decrease in NAND flash supply. On paper, this looks like a quick win. The drives still work, the price is right, and flash is flash, right?

In practice, reusing SSDs in high performance computing and AI environments often creates more problems than it solves. These workloads are more demanding on storage than most enterprise applications, and reused flash quickly exposes its weaknesses. Below are some of the most common issues teams face when attempting to reuse SSDs in demanding environments.

Unknown Wear and Endurance History

Flash memory has a limited number of program and erase cycles. With reused drives, that history is often incomplete or completely unknown. A drive may appear healthy yet already be deep into its wear budget. When such a drive is dropped into write-heavy AI pipelines or checkpoint-intensive HPC workflows, its remaining endurance can disappear rapidly, leading to unexpected failures.

Enterprise SSDs generally offer between 1,000 and 3,000 program/erase (P/E) cycles, while consumer TLC and QLC drives may offer as few as 100–500 P/E cycles depending on NAND type. This means drives that have already been used can have very limited remaining endurance when redeployed.
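To make the wear-budget arithmetic concrete, here is a minimal sketch in Python. It assumes that one full drive write per day consumes roughly one P/E cycle before write amplification, which is a simplification. The function name, its parameters, and the example inputs are all hypothetical and chosen only for illustration.

```python
def remaining_endurance(rated_pe_cycles, consumed_pe_cycles,
                        host_dwpd, write_amplification=1.0):
    """Estimate how much of a drive's P/E budget is left.

    Assumption (not from the article): one full drive write per day
    (DWPD) costs about one P/E cycle, scaled by write amplification.
    """
    if rated_pe_cycles <= 0 or host_dwpd <= 0 or write_amplification <= 0:
        raise ValueError("rated cycles, DWPD and write amplification must be positive")

    # A drive past its rating has no budget left, not a negative one.
    remaining = max(rated_pe_cycles - consumed_pe_cycles, 0)
    cycles_per_day = host_dwpd * write_amplification

    return {
        "remaining_cycles": remaining,
        "remaining_fraction": remaining / rated_pe_cycles,
        "days_left": remaining / cycles_per_day,
    }


# Illustrative inputs only: a drive rated for 3,000 cycles that has
# already consumed 2,400, redeployed at 1 DWPD with 1.5x amplification.
estimate = remaining_endurance(3000, 2400, host_dwpd=1.0, write_amplification=1.5)
print(estimate)
```

With these made-up inputs the drive has 20% of its rated cycles left, which works out to about 400 days at the assumed write rate. The catch described above still applies: for a reused drive, the consumed cycle count is often the number nobody can vouch for.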

Unpredictable Performance

As NAND degrades, performance behavior changes. Garbage collection may become more aggressive, write amplification may increase, and sustained write throughput may drop rapidly under load. For AI training jobs or parallel file systems that rely on consistent performance, this can translate into difficult-to-diagnose noisy neighbors, stalled jobs, or slowdowns that only become visible at scale.

Reduced Reliability and Higher Failure Rates

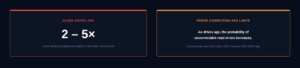

The aging of flash also means higher bit error rates. While modern SSDs rely heavily on error correction to hide this, there is a limit to how much correction is possible. As drives age, the probability of uncorrectable read errors increases, which is especially risky for large datasets, checkpoints, and long-running simulations where data integrity is critical.

Flash reliability data shows that uncorrectable read error (URE) rates increase with NAND age, with older drives being 2–5× more likely to experience latent errors than new drives.

Inconsistent Behavior Across Drives

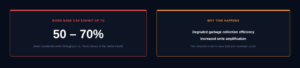

Repurposed SSDs rarely come from a single, clean batch. They often differ in firmware version, usage patterns, and workload history. Mixing drives that were previously used for caching, logging, or read-heavy workloads can result in uneven performance and reliability within the same storage tier. In a clustered environment, that inconsistency can propagate outward and affect overall system behavior.

Performance tests of mixed-age SSD fleets reveal that worn NAND can exhibit up to 50–70% lower sustained write throughput compared to fresh drives of the same model. This reduction is tied to degraded garbage collection efficiency and increased write amplification.
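One way to see how a single worn drive can affect a whole group is a simple striping model, sketched below in Python. It assumes that writes are striped evenly across all drives and that each stripe completes only when its slowest member finishes. That is a deliberate simplification rather than a description of any particular file system, and the function name and throughput figures are hypothetical.

```python
def striped_write_throughput(per_drive_mbps: list[float]) -> float:
    """Aggregate sustained write throughput of an evenly striped group.

    Assumption: every drive receives an equal share of each stripe, so
    the slowest drive sets the pace for the whole group.
    """
    if not per_drive_mbps:
        raise ValueError("at least one drive is required")
    return len(per_drive_mbps) * min(per_drive_mbps)


# Illustrative figures only: eight fresh drives versus the same group
# with one member sustaining 60% less (within the 50-70% range cited).
fresh_group = [2000.0] * 8
mixed_group = [2000.0] * 7 + [800.0]

print(striped_write_throughput(fresh_group))
print(striped_write_throughput(mixed_group))
```

Under this model, one worn drive out of eight pulls the entire group down to the worn drive's pace, which is why inconsistency at the device level can surface as a cluster-wide slowdown.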

Limited or Voided Vendor Support

Many SSD vendors do not support redeployed or repurposed drives, especially in enterprise and data center environments. This can complicate troubleshooting when problems arise and make RMA difficult or impossible. Without vendor support, storage teams are left responsible for the risks and operational consequences when things go wrong.

Firmware and Compatibility Issues

Drives that were previously tuned or optimized for a specific workload may not behave as expected when moved to a different environment. Firmware settings, internal wear leveling strategies, or power loss protection behavior may all differ. In HPC and AI systems that rely on tight integration between storage, networking, and compute, these mismatches can create subtle but serious problems.

Security and Data Sanitization Risks

Reusing drives also raises data security concerns. If secure erase procedures are incomplete or performed incorrectly, residual data may remain on the device. For organizations operating under compliance or data privacy requirements, this creates unnecessary risk.

Higher Operational Overhead

Repurposed SSDs require more effort to manage. Teams need to closely monitor health metrics, track wear levels, and proactively identify drives that are approaching failure. This additional overhead adds complexity to operations and increases the possibility of human error, especially at scale.
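As a rough illustration of what that monitoring involves, the Python sketch below screens a fleet against a few health indicators. It assumes the metrics have already been collected from each drive. The field names mirror counters in the NVMe SMART/Health log (percentage used, available spare and its threshold, media errors), but the `DriveHealth` class, the `flag_drives` function, the 80% wear cutoff, and the sample fleet are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DriveHealth:
    serial: str
    percentage_used: int            # vendor estimate of rated life consumed
    available_spare: int            # remaining spare capacity, in percent
    available_spare_threshold: int  # spare level the vendor treats as critical
    media_errors: int               # unrecovered data integrity errors


def flag_drives(fleet, max_percentage_used=80):
    """Return {serial: [reasons]} for drives that need attention.

    The wear cutoff is an arbitrary example value, not a recommendation.
    """
    flagged = {}
    for drive in fleet:
        reasons = []
        if drive.percentage_used >= max_percentage_used:
            reasons.append("wear")
        if drive.available_spare <= drive.available_spare_threshold:
            reasons.append("low_spare")
        if drive.media_errors > 0:
            reasons.append("media_errors")
        if reasons:
            flagged[drive.serial] = reasons
    return flagged


# Made-up fleet for illustration.
fleet = [
    DriveHealth("A001", percentage_used=35, available_spare=100,
                available_spare_threshold=10, media_errors=0),
    DriveHealth("A002", percentage_used=91, available_spare=100,
                available_spare_threshold=10, media_errors=0),
    DriveHealth("A003", percentage_used=60, available_spare=8,
                available_spare_threshold=10, media_errors=3),
]
print(flag_drives(fleet))
```

Even a simple check like this has to run continuously across every drive, and someone has to act on the results, which is where the added operational burden comes from.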

Shortened Service Life

Even when repurposed drives initially appear to work well, their remaining service life is often limited. This leads to more frequent replacements, increased maintenance windows, and greater potential for disruption during critical workloads.

The Illusion of Cost Savings

While reusing SSDs can reduce upfront capital costs, those savings often dissipate over time. Increased failure rates, unpredictable performance, higher operational burden and potential downtime all add up. In many cases, the total cost of ownership exceeds that of deploying new, workload-tailored flash from the start.
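The trade-off can be sketched as a simple expected-cost model in Python. Everything here is an assumption made for illustration: the `FlashOption` class, the `total_cost` function, the cost categories, and especially the numbers, which are placeholders rather than measured prices or failure rates. The result depends entirely on the inputs, so the model is only useful when fed an organization's own figures.

```python
from dataclasses import dataclass


@dataclass
class FlashOption:
    unit_price: float                 # purchase cost per drive
    annual_failure_rate: float        # expected fraction of drives failing per year
    replacement_labor: float          # labor cost per drive swap
    downtime_cost_per_failure: float  # cost of disruption per failure


def total_cost(option: FlashOption, drive_count: int, years: float) -> float:
    """Upfront cost plus the expected cost of failures over the period.

    Assumption: each failed drive is replaced like for like, and
    failures are independent with a constant annual rate.
    """
    upfront = option.unit_price * drive_count
    expected_failures = option.annual_failure_rate * drive_count * years
    cost_per_failure = (option.unit_price
                        + option.replacement_labor
                        + option.downtime_cost_per_failure)
    return upfront + expected_failures * cost_per_failure


# Placeholder inputs, not real market data.
new_flash = FlashOption(unit_price=1000.0, annual_failure_rate=0.01,
                        replacement_labor=200.0, downtime_cost_per_failure=5000.0)
reused_flash = FlashOption(unit_price=300.0, annual_failure_rate=0.08,
                           replacement_labor=200.0, downtime_cost_per_failure=5000.0)

for name, option in (("new", new_flash), ("reused", reused_flash)):
    print(name, total_cost(option, drive_count=100, years=3))
```

With these placeholder values the reused option starts far cheaper but ends up costing more over three years, because failure-related costs dominate. Lowering the assumed downtime cost or failure rate can reverse that outcome.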

Final Thoughts

HPC and AI workloads place unique and constant demands on storage systems. They expect consistent performance, predictable behavior, and high reliability at scale. Reused SSDs, with their unknown histories and uneven characteristics, rarely meet those expectations for long. Although the idea of reuse may be attractive, the risks often outweigh the rewards. For environments where performance and stability really matter, purpose-selected flash with known endurance and support remains the safer and more cost-effective choice in the long run.

About the Author

Petros Koutoupis has spent more than two decades in the data storage industry, working for companies which include Xyratex, Cleversafe/IBM, Seagate, Cray/HPE and now, VDURA. In addition to his engineering work, he is a technical writer and reviewer of books and articles on data storage and open-source technologies and has previously served on the editorial board of Linux Journal magazine.

About VDURA

VDURA builds the world’s most powerful data platform for AI and high-performance computing, blending flash-first speed with hyperscale capacity and 12-nines durability, all delivered with breakthrough simplicity. Visit vdura.com for more information.

The future of HPC and AI is still being written and VDURA is at the forefront, driving innovation.